Did Cursor really steal Kimi???

Theo (t3.gg) breaks down how Cursor’s Composer 2 is a heavily post-trained Kimi K2.5, raising open-weight licensing and disclosure concerns.

- Cursor’s Composer 2 is built on Kimi K2.5 (Moonshot AI), with 3x the original model’s compute spent on post-training RL using Cursor’s proprietary chat history data.

- Anthropic’s $200/month Claude Code plan now delivers ~$5,000 in compute, a 25x subsidy that makes it nearly impossible for Cursor to profit on resold inference.

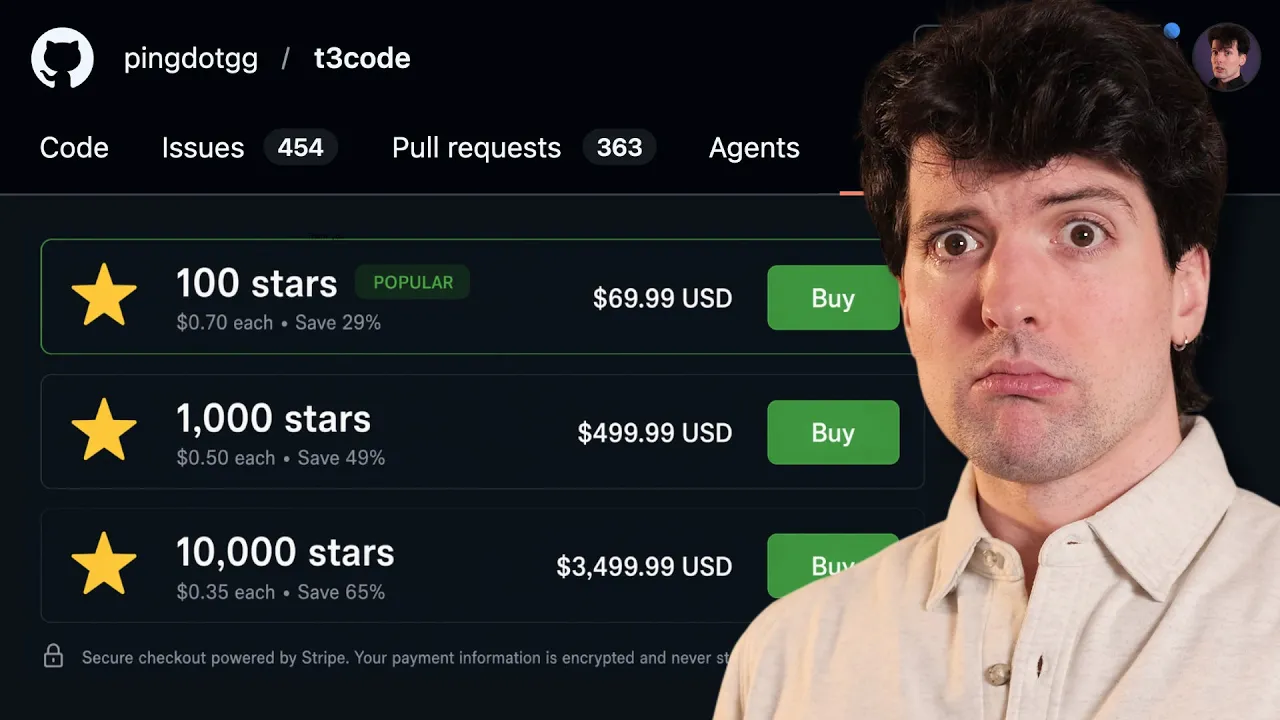

- Cursor routed training and inference through Fireworks AI, using that middleman relationship to technically satisfy Kimi’s modified MIT license without displaying ‘Kimi K2.5’ in their UI.

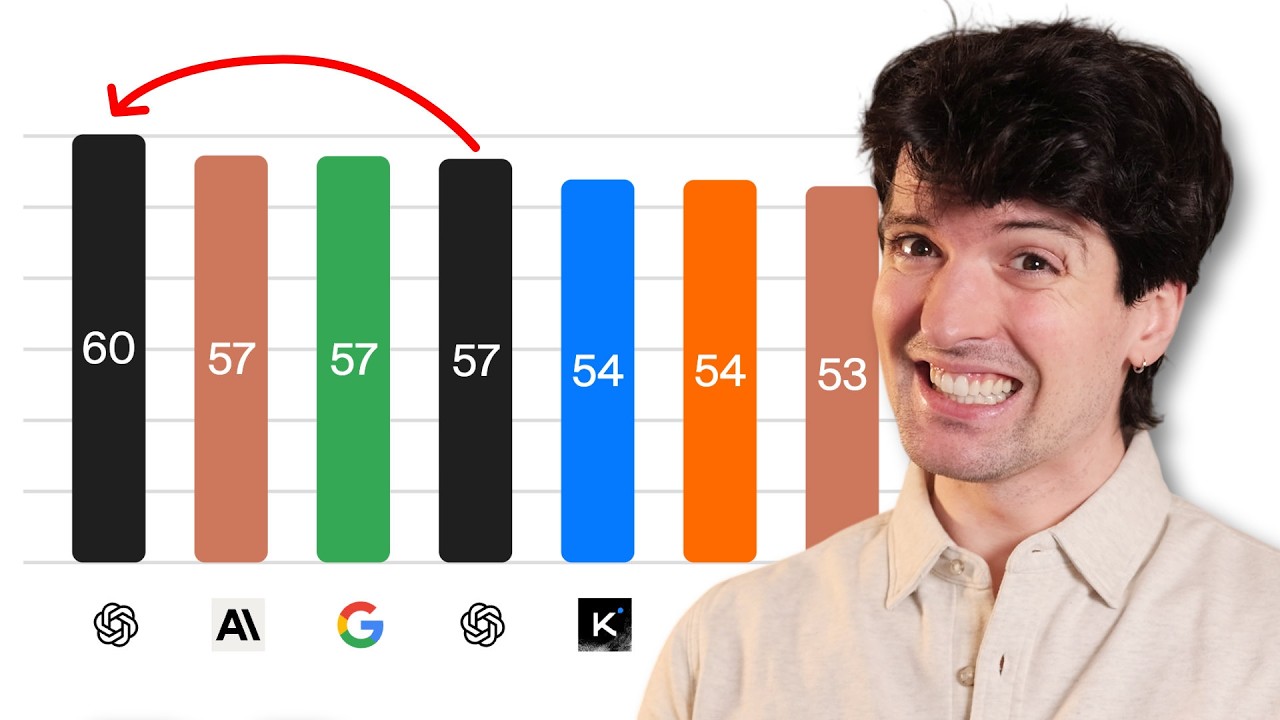

- Composer 2 costs $0.50 per million input tokens and $2.50 per million output tokens, one-tenth the price of Claude Opus 4.6, while matching or beating Opus on coding benchmarks including Terminal Bench.

- The undisclosed base model came to light when a third party found ‘kimi-k2.5-rl-0317’ in Cursor’s API URL structure, not through any Cursor announcement.

- A Kimi/Moonshot employee publicly expressed confusion, indicating the partnership was not widely known internally at Moonshot before launch.

- Theo warns this incident may push open-weight model labs to stop releasing weights, threatening the competitive pressure that has driven frontier model cost reductions.

- Cursor’s leverage over Anthropic comes from its chat history data, estimated at hundreds of millions of exchanges and used for RL post-training, which dwarfs the 150,000 exchanges Anthropic accused DeepSeek of stealing.
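
The pricing claims above are simple ratios, and a short Python sketch makes the arithmetic easy to check. It uses only the figures quoted in this summary. The Opus per-token prices are not quoted here; they are derived from the "one-tenth the price" claim, so treat them as implied values, not a price list. The function names and the example workload (2M input tokens, 400k output tokens) are hypothetical, chosen only for illustration.

```python
# Figures quoted in the summary; names are illustrative, not from any API.
PLAN_PRICE_USD = 200        # monthly Claude Code plan price
COMPUTE_VALUE_USD = 5_000   # approximate compute delivered per month

COMPOSER_IN_PER_M = 0.50    # USD per million input tokens
COMPOSER_OUT_PER_M = 2.50   # USD per million output tokens
CLAIMED_PRICE_RATIO = 10    # summary's claim: Opus costs 10x Composer 2


def subsidy_multiple(plan_price: float, compute_value: float) -> float:
    """How many dollars of compute each subscription dollar buys."""
    return compute_value / plan_price


def request_cost(tokens_in: int, tokens_out: int,
                 in_per_m: float, out_per_m: float) -> float:
    """Cost in USD for a workload at per-million-token prices."""
    return tokens_in / 1_000_000 * in_per_m + tokens_out / 1_000_000 * out_per_m


multiple = subsidy_multiple(PLAN_PRICE_USD, COMPUTE_VALUE_USD)

# Assumption: Opus prices implied by the claimed ratio, not quoted directly.
implied_opus_in = COMPOSER_IN_PER_M * CLAIMED_PRICE_RATIO
implied_opus_out = COMPOSER_OUT_PER_M * CLAIMED_PRICE_RATIO

# Hypothetical workload: 2M tokens in, 400k tokens out.
composer_cost = request_cost(2_000_000, 400_000,
                             COMPOSER_IN_PER_M, COMPOSER_OUT_PER_M)
opus_cost = request_cost(2_000_000, 400_000,
                         implied_opus_in, implied_opus_out)

print(f"Subsidy multiple: {multiple:.0f}x")
print(f"Composer 2: ${composer_cost:.2f}, implied Opus: ${opus_cost:.2f}")
```

Because both the input and output prices scale by the same factor, the cost ratio stays at 10x for any mix of input and output tokens, not just this example workload.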

2026-03-22 · Watch on YouTube