I don’t really like GPT-5.5…

Theo (t3.gg) gives a mixed review of GPT-5.5, praising its raw capability while criticizing its laziness, context-stickiness, and steep price hike.

- GPT-5.5 costs $5 per million input tokens and $30 per million output tokens: twice the price of GPT-5.4 and about 20% more than Claude Opus 4.7.

- Token efficiency is the key justification for the higher price: GPT-5.5 x-high used 75M tokens versus GPT-5.4’s ~150M on the same benchmark.

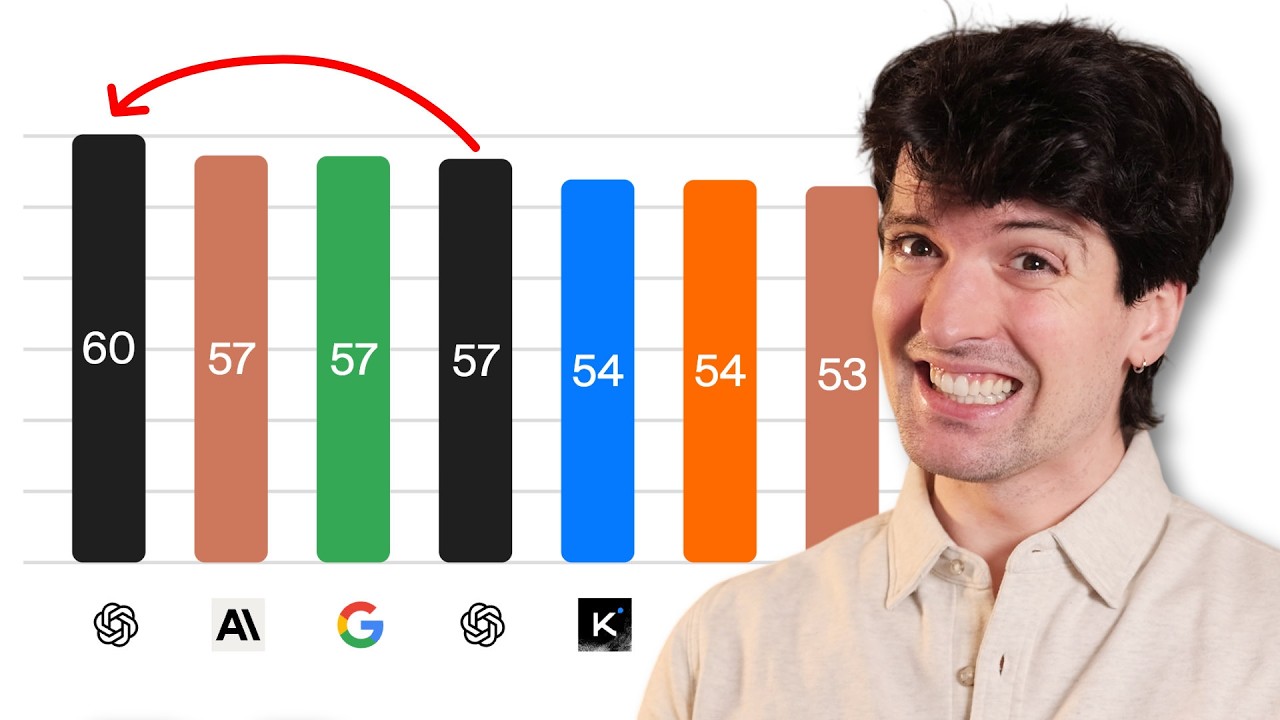

- GPT-5.5 medium scores nearly identically to 5.4 x-high on the AI intelligence index, at roughly the same cost.

- Trained and shipped on Nvidia GB200 NVL72 systems; there was no official public API at launch, only Codex endpoints.

- The Pro model solved three DEF CON cipher puzzles that had gone unsolved for 5–10 years; one took 163 minutes in ChatGPT.

- Core complaint: once wrong information enters the context window, the model cannot be prompted out of it; the only fix is to kill the thread and start over.

- Theo recommends using lower reasoning tiers (low/medium) by default, writing more explicit upfront prompts, and starting fresh threads aggressively.
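The pricing and token-efficiency points above can be checked with some quick arithmetic. The sketch below is illustrative only. The GPT-5.5 prices and the 75M / ~150M token counts come from this summary, and the GPT-5.4 prices are derived from the "twice the price" claim rather than taken from a price sheet. The split between input and output tokens is not given anywhere in the source, so `OUTPUT_SHARE` is a made-up assumption, and the names `Pricing`, `run_cost`, and `benchmark_cost` are hypothetical helpers.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Pricing:
    input_per_m: float   # USD per million input tokens
    output_per_m: float  # USD per million output tokens


def run_cost(pricing: Pricing, input_tokens_m: float, output_tokens_m: float) -> float:
    """Cost in USD for a run, with token counts given in millions."""
    return pricing.input_per_m * input_tokens_m + pricing.output_per_m * output_tokens_m


# Prices stated in the summary.
GPT_5_5 = Pricing(input_per_m=5.00, output_per_m=30.00)
# Derived from the "2x GPT-5.4" claim, not from an official price list.
GPT_5_4 = Pricing(input_per_m=GPT_5_5.input_per_m / 2,
                  output_per_m=GPT_5_5.output_per_m / 2)

# ASSUMPTION: the source does not say how benchmark tokens split between
# input and output. 0.8 is an arbitrary placeholder.
OUTPUT_SHARE = 0.8


def benchmark_cost(pricing: Pricing, total_tokens_m: float,
                   output_share: float = OUTPUT_SHARE) -> float:
    """Cost of a benchmark run given its total tokens (millions) and output share."""
    output_tokens_m = total_tokens_m * output_share
    input_tokens_m = total_tokens_m - output_tokens_m
    return run_cost(pricing, input_tokens_m, output_tokens_m)


cost_5_5 = benchmark_cost(GPT_5_5, total_tokens_m=75)    # 5.5 x-high, 75M tokens
cost_5_4 = benchmark_cost(GPT_5_4, total_tokens_m=150)   # 5.4, ~150M tokens

print(f"GPT-5.5: ${cost_5_5:,.2f}")
print(f"GPT-5.4: ${cost_5_4:,.2f}")
```

Under these assumptions the two totals match whatever value `OUTPUT_SHARE` takes, as long as both runs use the same split: doubling the per-token price while halving the token count cancels out. Real runs will differ if the input/output mix changes between models.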

2026-04-24 · Watch on YouTube