OpenAI is lying

Theo (t3.gg) calls OpenAI’s GPT-5.4 frontend showcase a lie, showing that Opus and Kimi K2.5 produce dramatically better UI, the latter at a fraction of the cost.

- GPT-5.4 defaults to card-heavy layouts on every generation; OpenAI’s own design skill says “no cards” 13 times and the model ignores it every time.

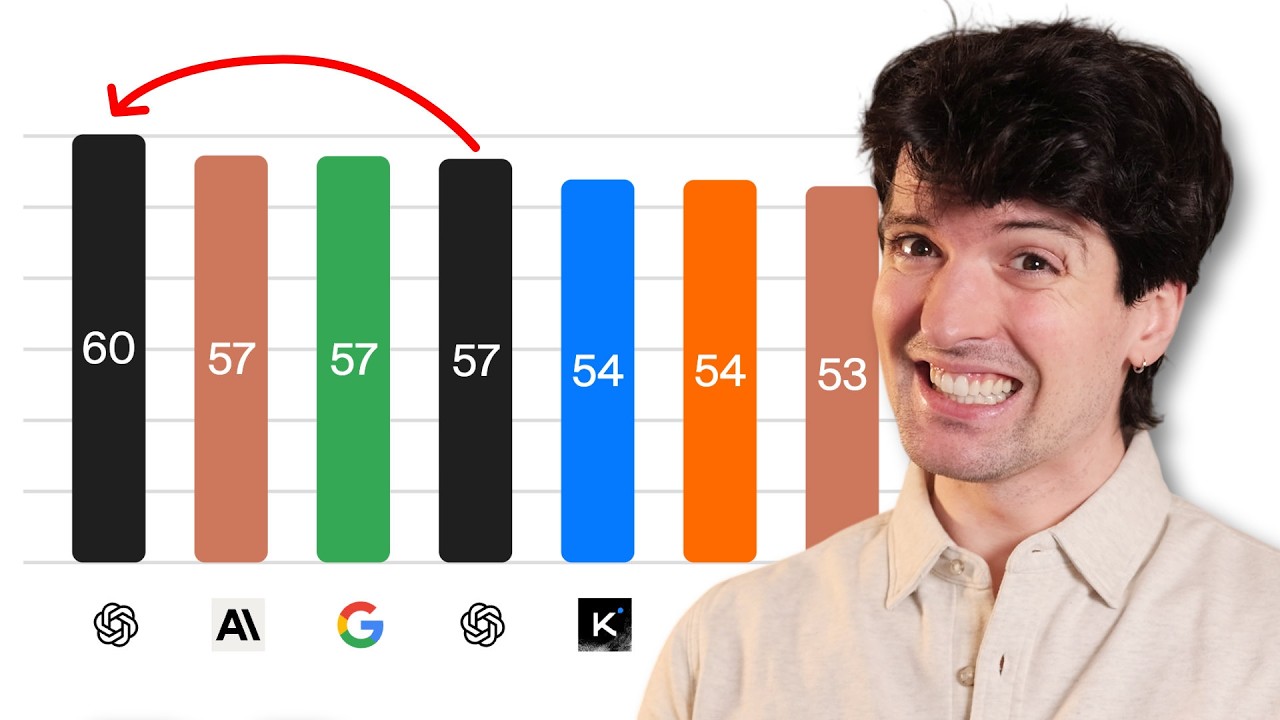

- Kimi K2.5, an open-weight model priced at roughly one-tenth of GPT-5.4, consistently outperforms it on UI variety and quality in a community benchmark.

- Theo estimates that GPT-5.3/5.4 draws on ~4 UI “templates” from its training data, Opus on ~10 better ones, and Gemini 3.1 on ~15 with more variance.

- Theo’s theory: Anthropic and Google share a higher-quality UI training dataset that OpenAI either didn’t buy or didn’t update, despite GPT-5.4’s alleged August 2025 training cutoff.

- The OpenAI blog post examples use identical left-text/right-image layouts with the same three-item nav across all designs, undermining the “delightful” claim.

- Theo’s design stack: Opus for most work, Gemini 3.1 Pro for variety (requires 5+ retries), and GPT only occasionally, for CSS bug cleanup.

- Theo speculates the article exists because of an internal OpenAI mandate to fix the frontend problem, with devrel executing what engineers couldn’t fix at the model level.

2026-03-26 · Watch on YouTube