Why GPT-4 is much smarter than it was a year ago – OpenAI cofounder John Schulman

Watch on YouTube ↗ Summary based on the YouTube transcript and episode description.

OpenAI cofounder John Schulman explains that GPT-4’s roughly 100-point Elo gain over its original release is mostly attributable to post-training improvements, not pre-training scale.
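To give a sense of what a 100-point Elo gap means in head-to-head terms, here is a minimal sketch. It assumes the standard logistic Elo formula with a scale of 400, as used in chess; the summary does not say which rating system or leaderboard the figure comes from, so the function name and the scale are illustrative assumptions.

```python
def elo_expected_score(rating_gap: float, scale: float = 400.0) -> float:
    """Expected score of the higher-rated side under the standard Elo model.

    rating_gap: rating of side A minus rating of side B.
    scale: 400 is the conventional chess value (an assumption here; the
           source does not specify the rating system behind the figure).
    A tie counts as half a win in the expected score.
    """
    return 1.0 / (1.0 + 10.0 ** (-rating_gap / scale))


if __name__ == "__main__":
    # Hypothetical comparison: a model rated 100 points above another.
    print(f"{elo_expected_score(100):.3f}")  # prints 0.640
```

Under these assumptions, a 100-point gap corresponds to the newer model being preferred in about 64% of pairwise comparisons against the original.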

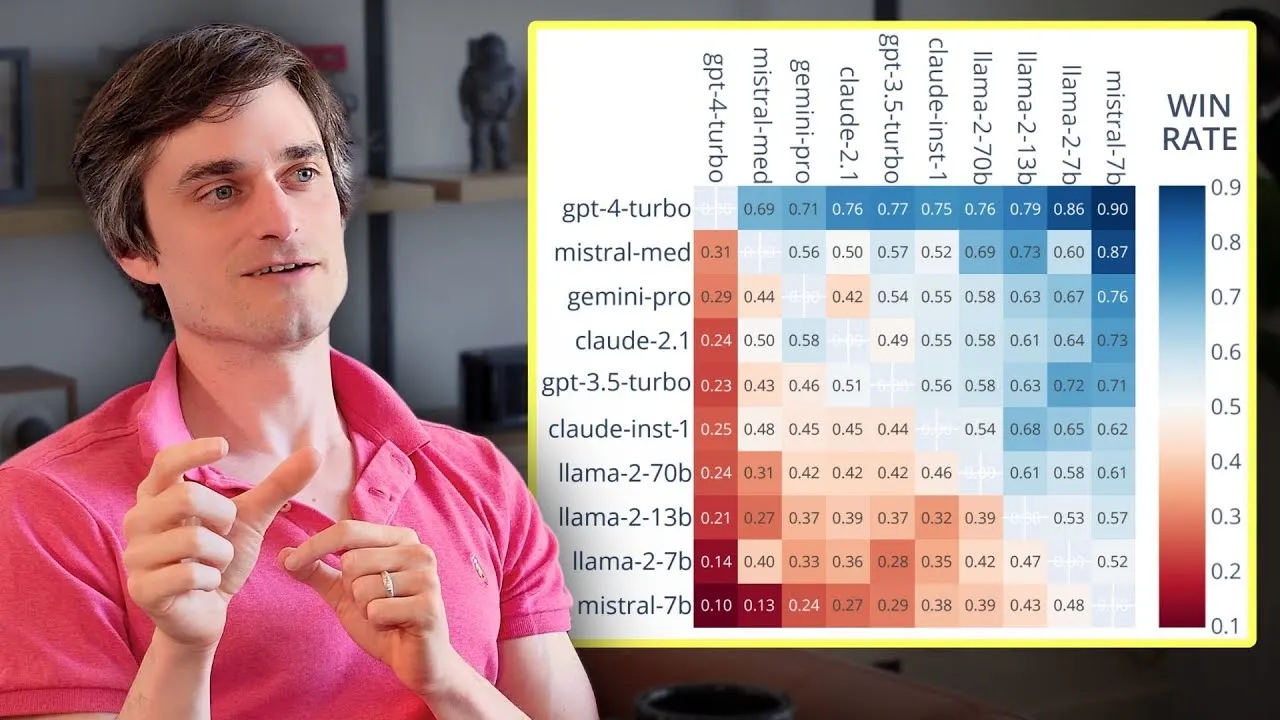

- The current GPT-4 has an Elo score roughly 100 points higher than the original release; Schulman attributes most of that gain to post-training.

- The compute split is still heavily lopsided toward pre-training, but Schulman expects the post-training share to grow as model outputs exceed average web quality.

- The post-training moat is real but partial: it rests on accumulated tacit and organizational R&D knowledge that is hard to spin up quickly.

- Smaller players likely distill or clone outputs from frontier models to bootstrap post-training, in violation of those models’ terms of service; larger labs avoid this for reasons of policy and pride.

- Key levers stacking up in post-training: data quality, data quantity, iteration cycles, and changing annotation types.

- Good post-training researchers combine empirical experimentation with first-principles reasoning about what ideal training data should look like.

- Schulman’s own edge comes from spanning the full stack: RL algorithms, data collection, annotation, and language model behavior.

2024-05-16