Jensen Huang – Will Nvidia’s moat persist?

Jensen Huang argues Nvidia’s moat is supply chain lock-in, CUDA install base, and algorithmic flexibility — not just chip specs — while defending China chip sales as essential to US tech leadership.

- Blackwell is 50x more energy-efficient than Hopper, far beyond the ~75% gain per 3-year node that Moore’s law alone would deliver; Jensen attributes the rest to algorithms and system architecture.

- Nvidia holds ~$100B in explicit purchase commitments upstream (TSMC, HBM, packaging); SemiAnalysis estimates ~$250B in total commitments, effectively locking competitors out of supply.

- 60% of TSMC’s N3 capacity goes to AI this year, rising to 86% next year. Jensen says supply bottlenecks resolve in 2–3 years once the demand signal is clear, and that energy policy is the harder constraint.

- Jensen’s case against China chip export controls: 50% of the world’s AI developers are in China, and conceding that market accelerates Huawei’s ecosystem while ending CUDA’s lock-in among Chinese researchers.

- Huawei’s Ascend 910C has roughly half the flops and memory bandwidth of the H200. Jensen says Huawei compensates by deploying twice as many chips, but argues that Nvidia’s architectural advantage compounds over time.
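
Two of the ratios above reduce to simple arithmetic. The sketch below is a back-of-envelope check, not Nvidia’s or Huawei’s methodology. It assumes the ~75% Moore’s-law figure can be treated as a 1.75x factor for the Hopper-to-Blackwell generation and that efficiency gains compose multiplicatively; it also assumes aggregate throughput scales linearly with chip count, ignoring interconnect, power, and software overheads. Both function names are hypothetical.

```python
import math


def non_process_gain(total_gain: float, process_gain: float) -> float:
    """Factor left over after dividing out the process-node contribution.

    Assumes gains compose multiplicatively: total = process * everything else.
    """
    return total_gain / process_gain


def chips_to_match(reference_chips: int, relative_perf: float) -> int:
    """Chips needed to equal the aggregate throughput of `reference_chips` parts.

    `relative_perf` is per-chip performance relative to the reference part
    (0.5 for a chip with half the flops). Assumes perfectly linear scaling.
    """
    return math.ceil(reference_chips / relative_perf)


if __name__ == "__main__":
    # Blackwell vs Hopper: 50x total, ~1.75x assumed to come from the node.
    print(round(non_process_gain(50, 1.75), 1))  # 28.6
    # A 910C-class chip at ~half an H200's flops needs twice the chip count.
    print(chips_to_match(1000, 0.5))  # 2000
```

Under these assumptions, roughly 28x of the 50x would have to come from algorithms and system architecture, which is the gap Jensen points to.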

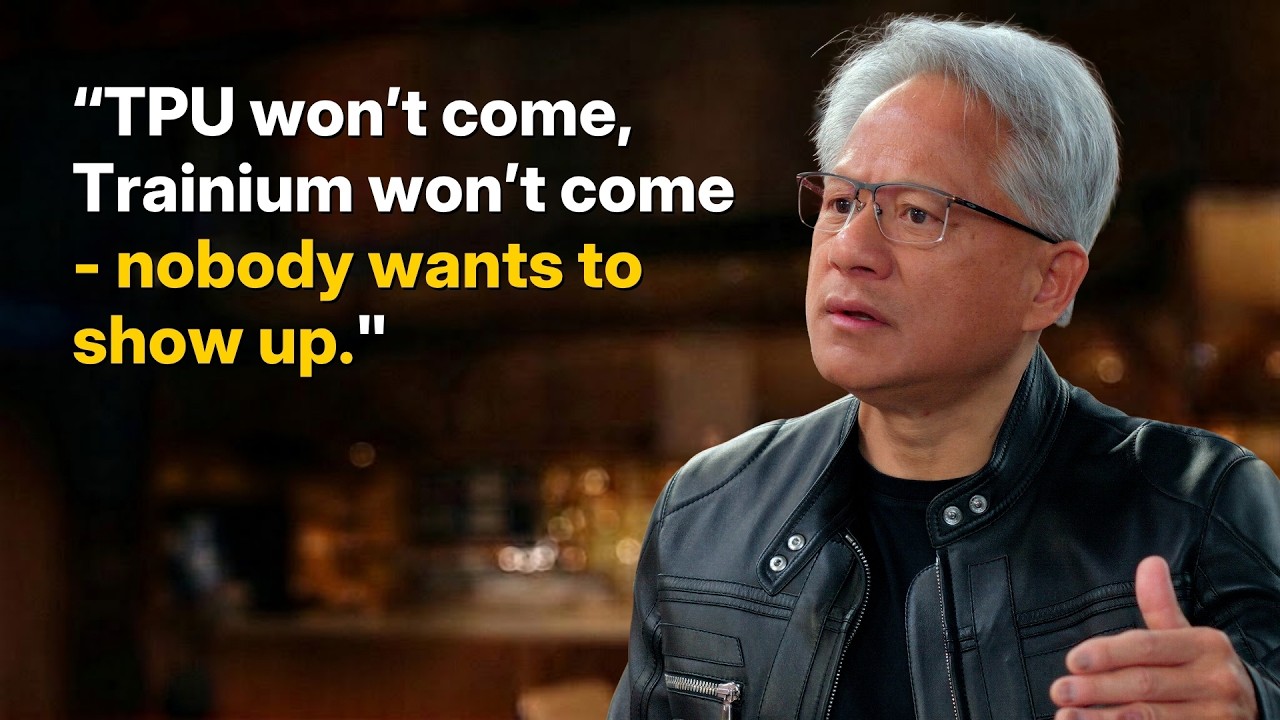

- TPUs trained two of the top three frontier models (Claude, Gemini), but Jensen argues GPU programmability enables new architectures (hybrid SSMs, diffusion-autoregressive fusion) that fixed systolic arrays cannot.

- Nvidia acquired Groq and plans to integrate it into the CUDA ecosystem to serve a new low-latency inference segment with high average selling prices (ASPs), where fast response times justify premium per-token pricing.
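
A systolic array is a grid of multiply-accumulate cells that pass operands to their neighbors in lockstep. The following is a minimal cycle-level sketch of a textbook output-stationary array in Python; it is an illustrative assumption, not a model of any TPU generation. Every cell performs the same fixed operation on whatever arrives, which suits dense matrix multiplication but offers no per-cell control flow. That rigidity is what Jensen contrasts with programmable GPU cores.

```python
def systolic_matmul(A, B):
    """Simulate an output-stationary systolic array computing A @ B.

    PE (i, j) owns C[i][j]. Row i of A enters from the left delayed by i
    cycles; column j of B enters from the top delayed by j cycles. Each
    cycle, every PE multiplies the pair it receives, adds the product to
    its accumulator, and forwards a to the right and b downward.
    """
    n, k_dim = len(A), len(A[0])
    if len(B) != k_dim:
        raise ValueError("inner dimensions must match")
    m = len(B[0])
    C = [[0] * m for _ in range(n)]
    a_reg = [[0] * m for _ in range(n)]  # value each PE forwards rightward
    b_reg = [[0] * m for _ in range(n)]  # value each PE forwards downward

    # The last operand pair reaches PE (n-1, m-1) at cycle k_dim + n + m - 3.
    for t in range(k_dim + n + m - 2):
        new_a = [[0] * m for _ in range(n)]
        new_b = [[0] * m for _ in range(n)]
        for i in range(n):
            for j in range(m):
                if j == 0:  # left edge: skewed feed from A
                    k = t - i
                    a_in = A[i][k] if 0 <= k < k_dim else 0
                else:  # value latched by the left neighbor last cycle
                    a_in = a_reg[i][j - 1]
                if i == 0:  # top edge: skewed feed from B
                    k = t - j
                    b_in = B[k][j] if 0 <= k < k_dim else 0
                else:  # value latched by the upper neighbor last cycle
                    b_in = b_reg[i - 1][j]
                C[i][j] += a_in * b_in  # the only operation a PE performs
                new_a[i][j], new_b[i][j] = a_in, b_in
        a_reg, b_reg = new_a, new_b
    return C


if __name__ == "__main__":
    print(systolic_matmul([[1, 2], [3, 4]], [[5, 6], [7, 8]]))  # [[19, 22], [43, 50]]
```

In this sketch, supporting a different per-cell operation would mean changing the simulated hardware itself, whereas a programmable core would only need new code; that is the flexibility argument in miniature.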

2026-04-15