How Gwern saw AI scaling coming

Watch on YouTube ↗ Summary based on the YouTube transcript and episode description.

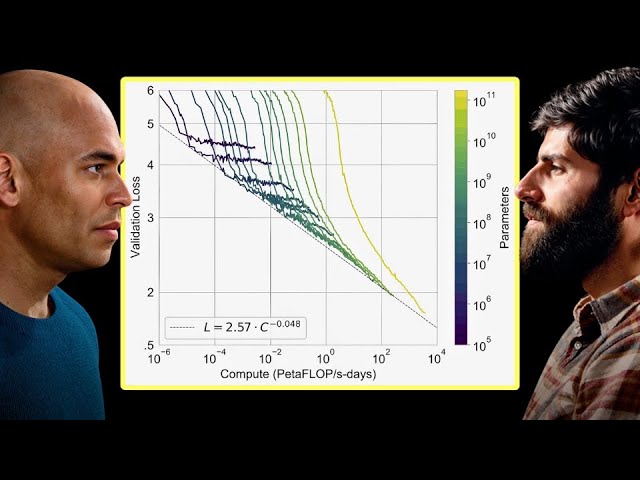

Gwern Branwen explains the empirical reasoning that led him to predict AI scaling laws years before most researchers accepted them.

- Gwern initially dismissed Kurzweil/Moravec connectionist arguments as magical thinking — compute alone can’t summon algorithms.

- Shane Legg’s blog extrapolations (generalist AI by 2019, human-level agents by 2025, AGI by 2030) kept him watching the trend.

- AlexNet was the first signal: a concrete win for connectionism, not just theory.

- He tracked arXiv daily and noticed datasets, model sizes, and GPU counts kept expanding with no ceiling in sight.

- GPT-1’s unsupervised sentiment neuron was a compute-first result — no clever algorithm, just scale.

- GPT-2 was his first holy-shit moment; GPT-3 was the decisive test — the largest neural net scale-up in history at that point.

- GPT-3’s few-shot learning chart confirmed scaling; the mainstream response, which dismissed the model as not state-of-the-art, prompted him to write up the scaling hypothesis publicly.

- Key early believers he tracked were Ilya Sutskever, Schmidhuber, and Legg: a handful of people whose worldview kept being proven right.

2024-11-19