Energy, not compute, will be the #1 bottleneck to AI progress – Mark Zuckerberg

Summary based on the YouTube transcript and episode description.

Mark Zuckerberg argues that energy permitting and grid capacity, not compute or capital, will be the binding constraint on frontier AI training.

- No gigawatt-scale training cluster has been built yet; most large data centers today draw 50–150 megawatts.

- Permitting new energy projects is a multi-year, government-regulated process, which makes rapid scaling structurally difficult.

- Building transmission lines across private and public land adds further regulatory delay on top of power plant approvals.

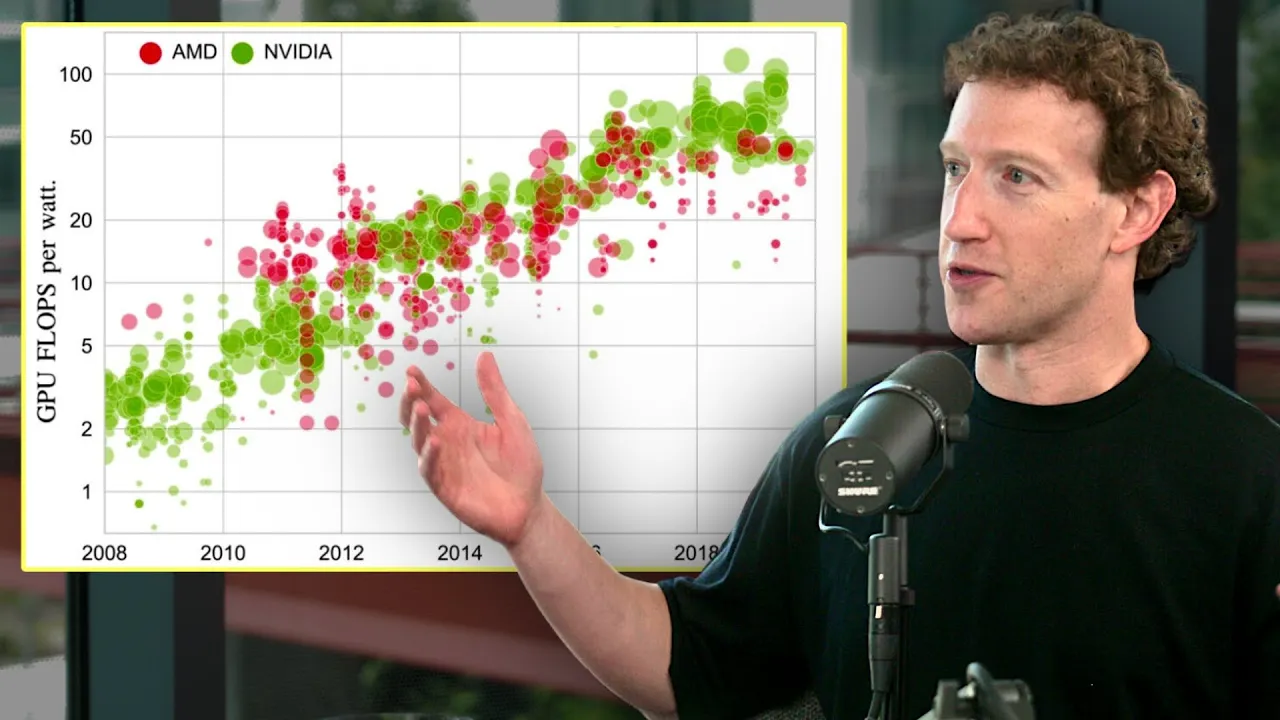

- GPU supply constraints that dominated recent years are easing; energy is the new bottleneck.

- Zuckerberg believes that even $1T or more in capital would not speed things up much, because the buildout would be limited by energy availability rather than money.

- 300 MW, 500 MW, and gigawatt-scale data centers are the logical next steps, but none exist yet.

- Scaling curves may continue, but Zuckerberg cautions that no one in the industry can guarantee exponential progress will hold.

2024-04-21