Can synthetic data unlock AI recursive self-improvement? — Mark Zuckerberg

Watch on YouTube ↗ Summary based on the YouTube transcript and episode description.

Mark Zuckerberg argues synthetic data is really inference, not training, and that recursive self-improvement is bounded by model architecture and physical constraints.

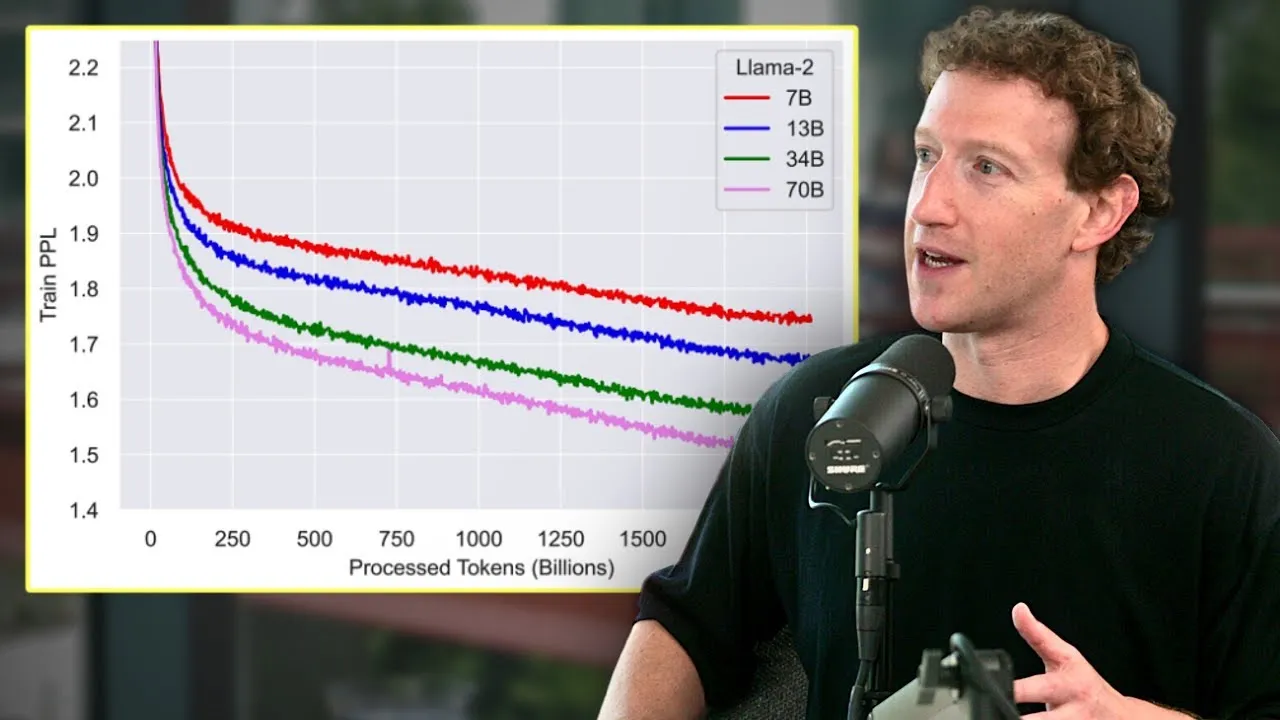

- Llama 3 70B trained on ~15 trillion tokens and was still learning at cutoff — Zuckerberg believes they could have fed it more and seen gains.

- Future large-model training will increasingly blur into inference: generating synthetic data is more inference than training, but feeds back into the training loop.

- Open-sourced Llama creates a real scenario where compute-rich states (Kuwait, UAE) could use it to bootstrap significantly smarter models.

- Recursive self-improvement via synthetic data is real but bounded: an 8B model cannot be bootstrapped to match a state-of-the-art multi-hundred-billion-parameter model.

- Physical constraints — energy availability and chip capacity — set a hard ceiling on model size and inference scale.

- Zuckerberg is optimistic on continued fast improvement but explicitly rejects the runaway-AI scenario as unlikely.

- He flags two competing geopolitical risks: open-sourcing helps China close the AI gap; not open-sourcing risks one actor gaining totalitarian power.

2024-04-22 · Watch on YouTube