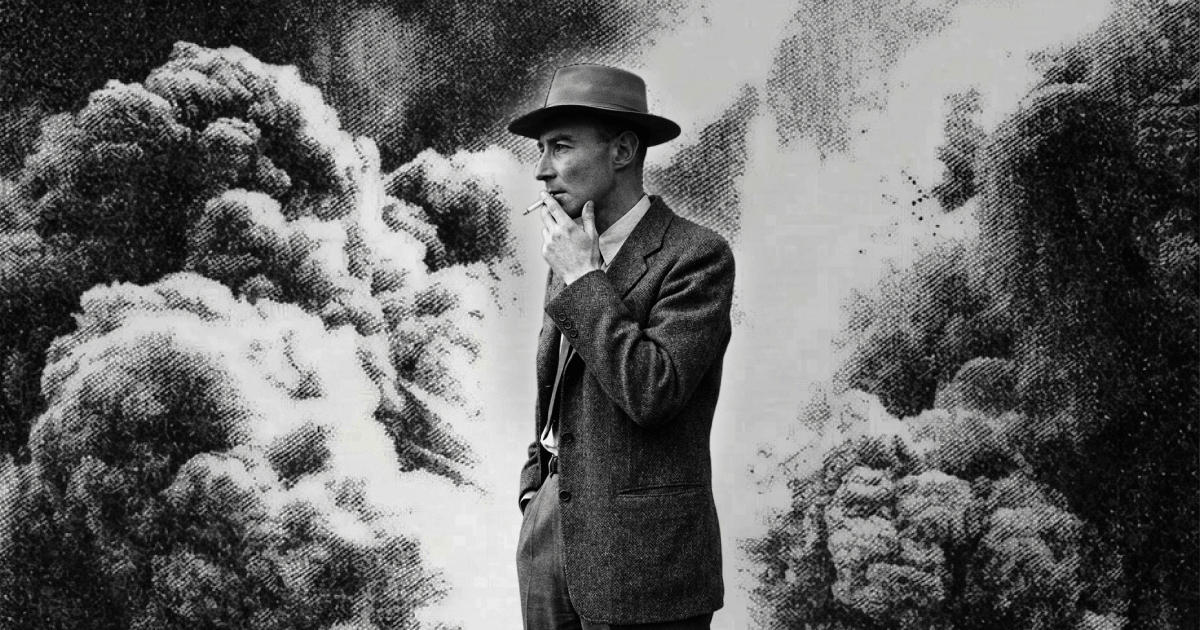

AI's Oppenheimer Moment

TLDR

- If AI is a strategic weapon comparable to nuclear technology, the question of who controls it cannot remain a private business decision.

Key Takeaways

- Torenberg uses the Truman-Oppenheimer meeting as a frame: once a civilization-scale weapon exists, the question of who wields it is unavoidable.

- The “McBombalds” hypothetical reframes Anthropic’s position: a private company holding a nuclear-class technology while negotiating conditional government access is historically unprecedented and politically untenable.

- Dario Amodei’s own framing, that AI is analogous to a nuclear weapon, logically implies that it cannot be treated as ordinary private property under normal IP and contract law.

- The piece cites the ongoing American-Israeli conflict in Iran and the Venezuela raid as live examples of an AI-enabled military edge, making the governance question immediate rather than theoretical.

- Torenberg does not argue the U.S. government is the ideal steward, only that critics of government AI access must name who deserves that power instead.

Why It Matters

- The Anthropic-government dispute is the first high-profile test of whether private AI labs can unilaterally set terms on military access to transformative technology.

- Accepting existential stakes as decisive in foreign policy (blocking Iran from acquiring nuclear weapons) while treating domestic AI control as a property dispute is a logical inconsistency that this essay names directly.

- The argument has direct implications for any frontier lab: if you publicly claim your model is civilization-scale, you are implicitly consenting to a national-security governance frame.

Erik Torenberg, Andreessen Horowitz · 2026-03-16