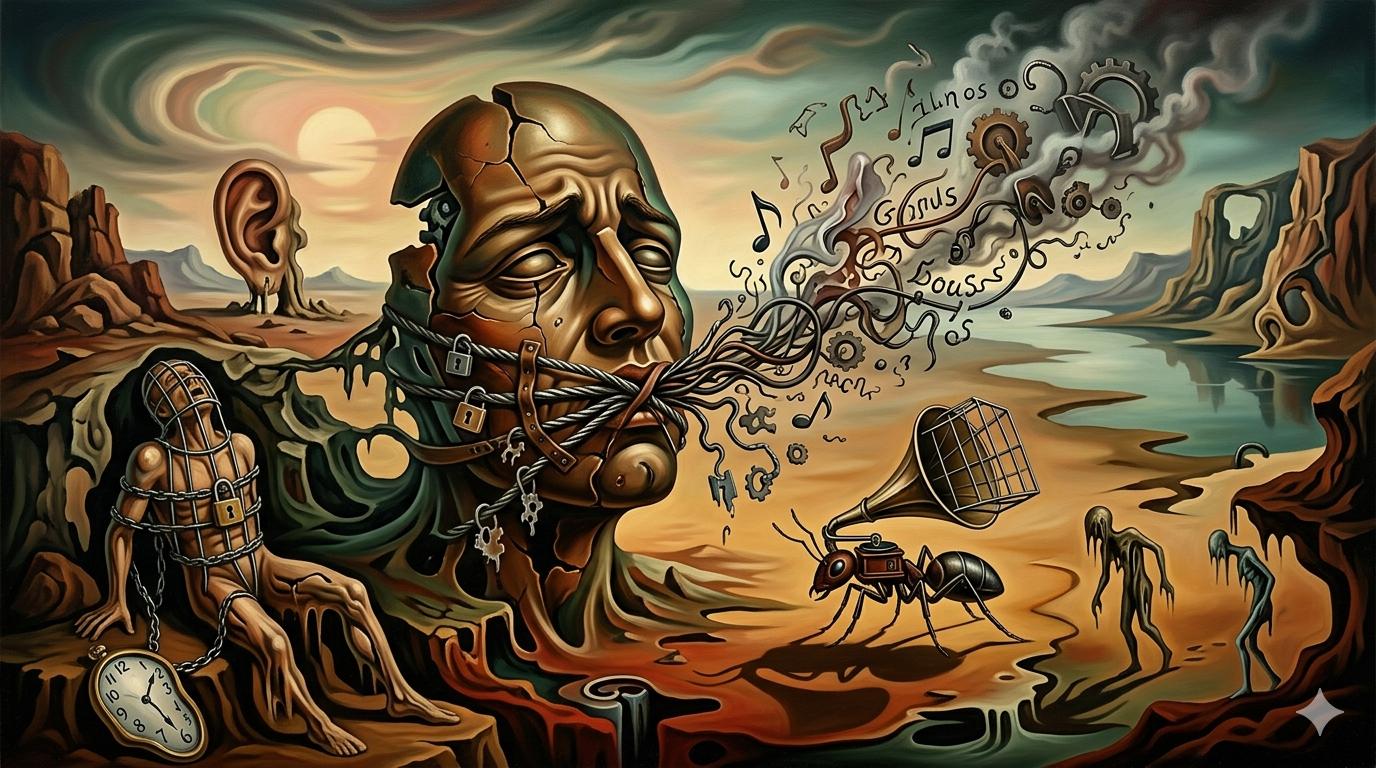

Even 'uncensored' models can't say what they want

Article

TL;DR

Removing refusal heads doesn’t restore word probabilities that were already suppressed during pretraining.

Key Takeaways

- Pretraining corpus filtering depletes token probability mass before RLHF or fine-tuning begins

- Abliteration removes explicit refusals, but the underlying distribution has already been shaped

- The effect is invisible to users: no refusal fires, and probability just silently shifts to safer tokens
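The takeaways above can be made concrete with a toy Python sketch. It assumes abliteration can be modeled as directional ablation, meaning one hypothetical "refusal direction" is projected out of the hidden state. This is a simplification. The vocabulary, weights, and hidden state are all invented for illustration and do not come from any real model. The "charged" token's low weights stand in for distribution shaping that happened before any refusal behavior existed.

```python
import math

# Toy sketch only: a 3-dimensional hidden state and a 3-token vocabulary.
# Every name and number here is hypothetical, chosen purely for illustration.
VOCAB = ["<refusal>", "neutral", "charged"]

# Hypothetical unembedding matrix: one row of weights per output token.
UNEMBED = [
    [0.0, 0.0, 3.0],   # refusal token reads only from the refusal direction
    [1.0, 1.0, 0.0],   # neutral ("safer") token
    [0.2, -1.0, 0.0],  # charged token: already pushed down by earlier training
]

REFUSAL_DIR = [0.0, 0.0, 1.0]  # hypothetical refusal direction


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def ablate(h, direction):
    """Project `direction` out of `h` (directional ablation)."""
    coef = dot(h, direction) / dot(direction, direction)
    return [x - coef * d for x, d in zip(h, direction)]


def next_token_probs(h):
    """Numerically stable softmax over the toy vocabulary for hidden state `h`."""
    logits = [dot(row, h) for row in UNEMBED]
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return {tok: e / total for tok, e in zip(VOCAB, exps)}


hidden = [1.0, 0.5, 2.0]  # hypothetical hidden state with a strong refusal component

before = next_token_probs(hidden)
after = next_token_probs(ablate(hidden, REFUSAL_DIR))

for tok in VOCAB:
    print(f"{tok:>10}: before={before[tok]:.3f}  after={after[tok]:.3f}")
```

Before ablation the refusal token takes almost all the mass. After ablation it no longer dominates, but the freed mass goes mostly to the neutral token. Because neither the neutral nor the charged row reads from the refusal direction, the charged-to-neutral probability ratio stays at exp(-1.8) ≈ 0.165 both before and after. Removing the refusal component cannot undo shaping that lives elsewhere in the weights.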

Discussion

Top comments:

- [the_data_nerd]: Removing the refusal head doesn’t restore probability mass that was depleted ten steps earlier in training

  Removing the refusal head does not put the missing distribution back. Every pass before it (the pretraining mix, SFT, RLHF, synthetic data) has already pulled the charged tokens down.

- [nodja]: Uncensored models were never trained on unfiltered data, so they can’t generate what they never learned

- [Majromax]: The baseline is unclear: "financial" is a perfectly valid completion for the test sentence used

- [llmmadness]: Failed to fine-tune a LoRA to replicate charged political speech, even on an uncensored base model