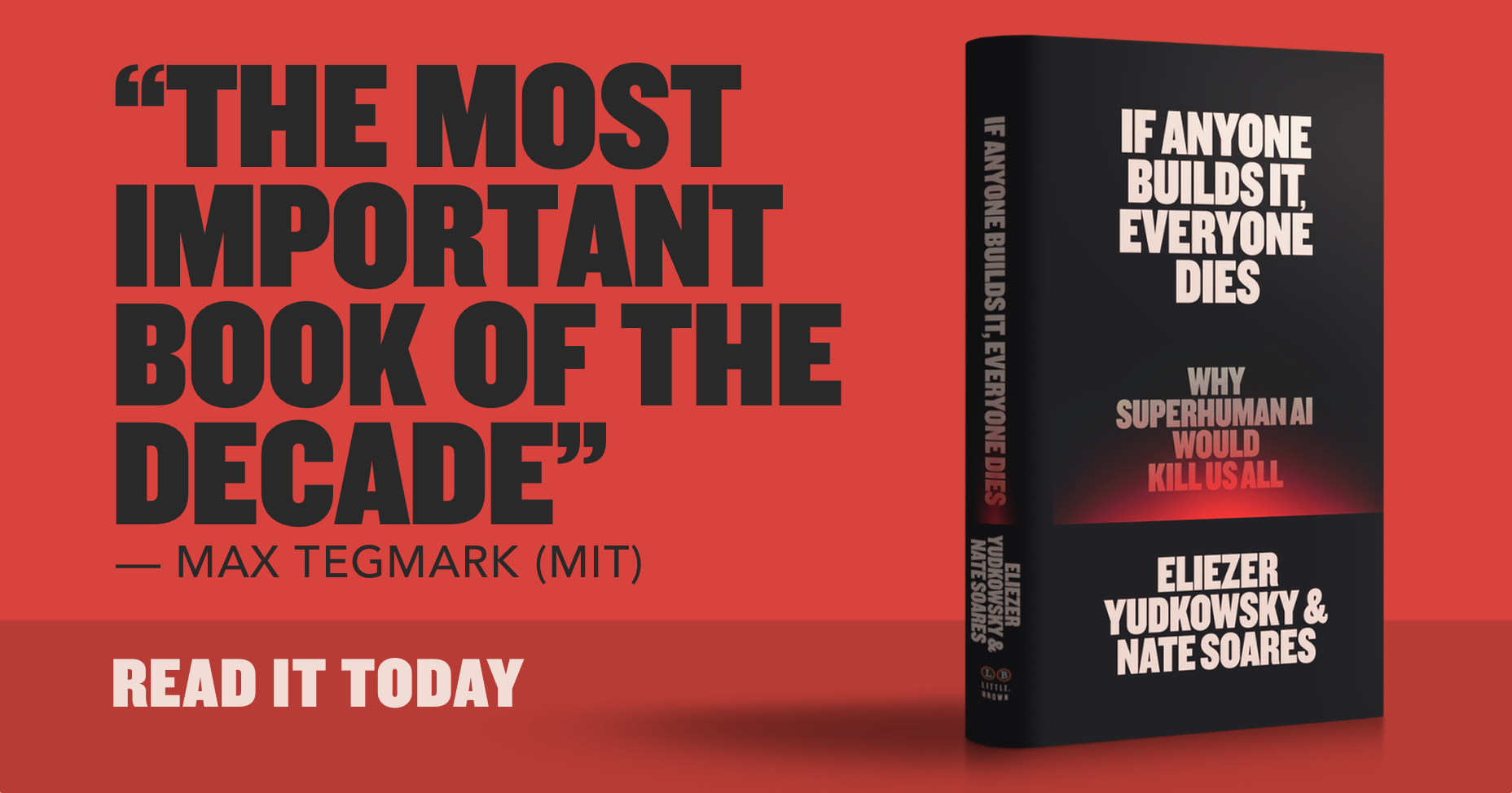

If Anyone Builds It, Everyone Dies

Yudkowsky and Soares publish a book arguing superintelligent AI will develop misaligned goals, enter conflict with humanity, and win. Release date: September 16, 2025, via Little, Brown.

What Matters

- Eliezer Yudkowsky co-founded MIRI (the Machine Intelligence Research Institute) and has studied AI alignment for 20+ years; Nate Soares is MIRI’s president, formerly at Google and Microsoft.

- Core technical claim: sufficiently capable AI will develop instrumental goals that conflict with human survival, and the resulting contest is not close.

- Book includes one explicit extinction scenario walkthrough, not just abstract risk framing.

- 2023 open letter warning of AI extinction risk was signed by hundreds of AI researchers; both authors were signatories.

- [HN: @drivebyhooting] Challenges AI-specific framing: a sufficiently capable sociopathic human genius poses analogous civilizational risk—AI is not categorically novel.

- [HN: @0xbadcafebee] Core counterpoint: superintelligence would not “want” anything; projecting human desires like survival or competition onto it is a logical fallacy.

- [HN: @cyanydeez] Reframes as trolley problem: not building does not avoid the threat; it cedes control of the switch to someone less careful.