https://morgin.ai/articles/even-uncensored-models-cant-say-what-they-want.html

Article

-

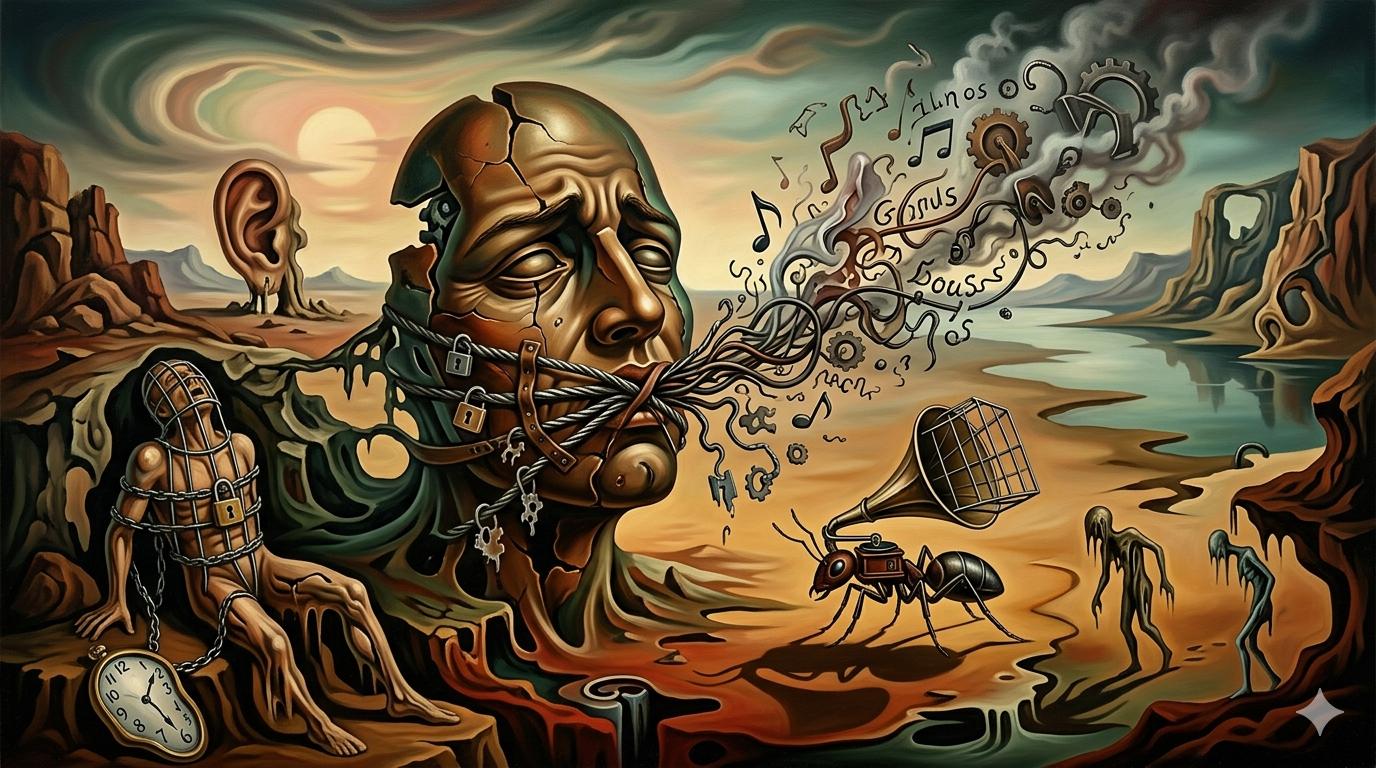

Analysis shows ‘uncensored’ fine-tuned models still suppress certain words probabilistically

-

No explicit refusal fires — model just shifts probability away from charged words

-

Framed as invisible shaping of output at scale, not hard content filters

Discussion

- Skeptics demand a control category (e.g. foods) to validate the ‘flinch’ baseline
- Counter: the training corpus itself contains societal flinch, so models reflect human data
- One practical attempt: fine-tuning a political LoRA failed to reproduce actual speech
- Commenters note that the n-word is conspicuously absent from the ‘slurs’ test list
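
The control-category objection can be made concrete with a small sketch. This is not the article's method: it assumes you already have per-word log-probabilities from a reference model and from the model under test, and every name and number below (`flinch_score`, `wordA`, the values) is hypothetical and made up for illustration.

```python
from statistics import mean


def mean_logprob_shift(reference, tested, words):
    """Average change in log-probability (tested minus reference) over `words`."""
    return mean(tested[w] - reference[w] for w in words)


def flinch_score(reference, tested, charged, control):
    """Shift on charged words minus the shift on control words.

    A negative value means the charged words lost more probability
    than the control words did.
    """
    return (mean_logprob_shift(reference, tested, charged)
            - mean_logprob_shift(reference, tested, control))


# Made-up log-probabilities for illustration only; these are not measurements.
reference = {"bread": -2.0, "rice": -2.5, "wordA": -3.0, "wordB": -3.5}
tested = {"bread": -2.1, "rice": -2.4, "wordA": -4.0, "wordB": -4.5}

score = flinch_score(
    reference,
    tested,
    charged=["wordA", "wordB"],  # placeholders for the charged word list
    control=["bread", "rice"],   # neutral category, e.g. foods
)
print(f"flinch score: {score:.2f}")
```

Subtracting the control shift is the point of the skeptics' request: if neutral words moved by the same amount, the score would be near zero and the ‘flinch’ would be indistinguishable from a general change in the model's output distribution.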
