Scaling laws are explained by memorization and not intelligence – Francois Chollet

Watch on YouTube ↗ Summary based on the YouTube transcript and episode description.

François Chollet argues LLM scaling laws measure memorization growth, not intelligence, and that skill and intelligence are categorically different things.

- Chollet’s core claim: general intelligence is rapid on-the-fly learning from little data, not breadth of memorized skills.

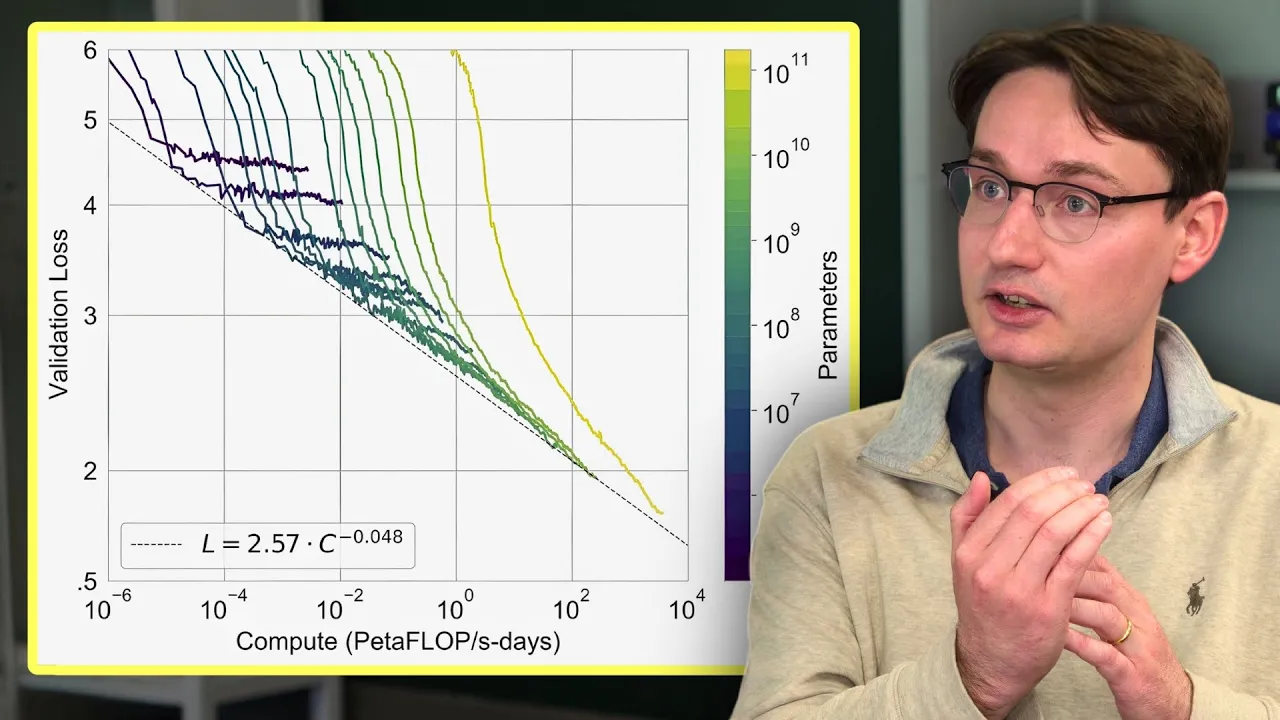

- Scaling laws track benchmark performance, but all major LLM benchmarks are solvable by memorizing a finite set of reasoning templates.

- LLMs are “big interpolative memories”, curves fitted to their training data through their parameters, so scaling them improves skill, not intelligence.

- Key distinction: “program fetching” (retrieving a memorized solution pattern) vs. “on-the-fly program synthesis” (composing a novel solution from parts); LLMs do only the former.

- A 95% score on the GSM8K grade-school math benchmark is cited as a case in point: those problems require no novel reasoning, only retrieval of the correct template.

- Scaling maximalists conflate skill with intelligence; Chollet calls this a “phenomenal confusion” driving false AGI confidence.

- Chollet concedes that memory is necessary for reasoning, since it supplies the building blocks, but memory alone is not sufficient for general intelligence.
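
The distinction between “program fetching” and “on-the-fly program synthesis” can be made concrete with a toy sketch. This is only an illustration under stated assumptions, not Chollet's own formulation or a model of how an LLM works: the primitive operations, the `MEMORIZED` table, and the function names are all hypothetical. Fetching is modeled as a lookup of a stored solution; synthesis is modeled as a brute-force search that composes stored building blocks to fit a few input/output examples of a task never seen before.

```python
from itertools import product

# Hypothetical building blocks held in memory.
PRIMITIVES = {
    "inc": lambda x: x + 1,
    "double": lambda x: x * 2,
    "square": lambda x: x * x,
}

# Hypothetical memorized solutions: task name -> stored sequence of primitives.
MEMORIZED = {
    "double_then_inc": ("double", "inc"),
}


def run(program, x):
    """Apply each primitive in the program to x, in order."""
    for name in program:
        x = PRIMITIVES[name](x)
    return x


def fetch_program(task_name):
    """Program fetching: return a stored solution, or None for an unseen task."""
    return MEMORIZED.get(task_name)


def synthesize_program(examples, max_len=3):
    """Toy synthesis: search compositions of primitives that fit all examples."""
    for length in range(1, max_len + 1):
        for program in product(PRIMITIVES, repeat=length):
            if all(run(program, x) == y for x, y in examples):
                return program
    return None


# A task absent from MEMORIZED, described by three examples of f(x) = 2 * (x + 1) ** 2.
examples = [(1, 8), (2, 18), (3, 32)]

fetched = fetch_program("inc_square_double")  # lookup fails: nothing was stored
synthesized = synthesize_program(examples)    # search composes a new program

print(fetched)
print(synthesized)
```

In this sketch memory is still required, because the search draws on the stored primitives, but the lookup alone cannot handle the unseen task. That mirrors the point that memory is necessary but not sufficient.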

2024-06-12