LLMs will hit the data wall if they can’t generalize – OpenAI cofounder John Schulman

Summary based on the YouTube transcript and episode description.

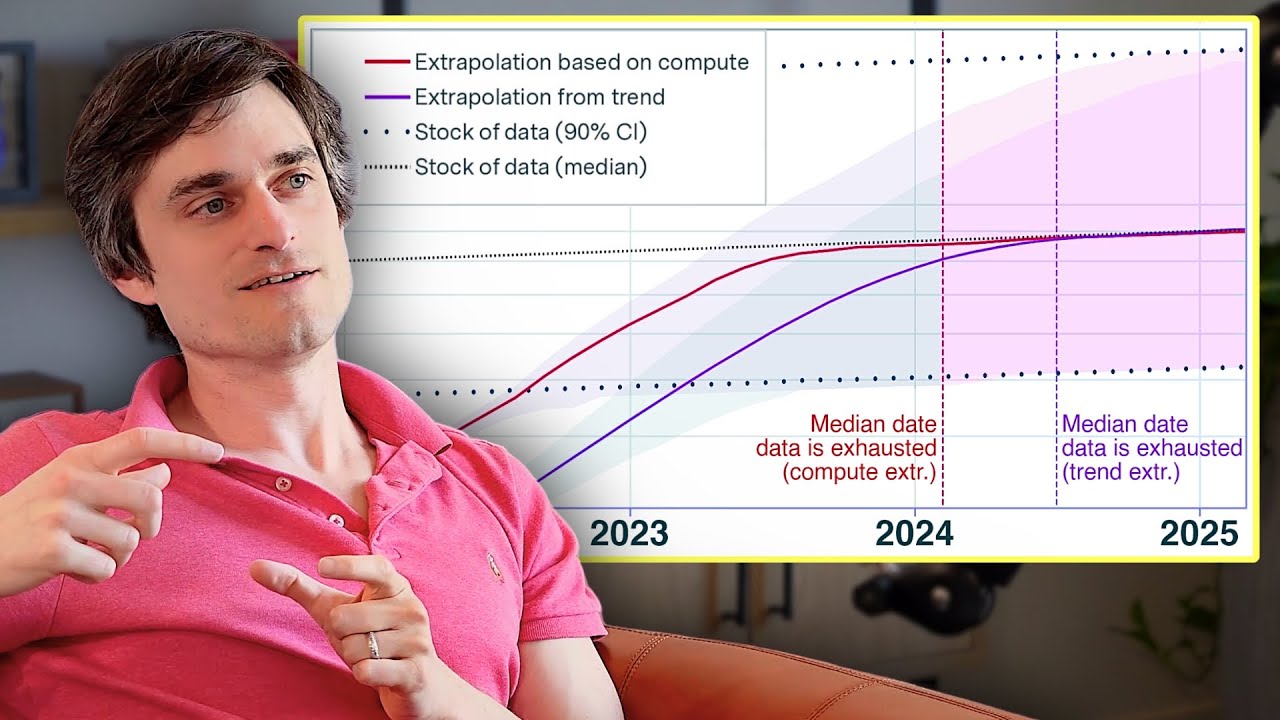

OpenAI cofounder John Schulman argues that LLMs won't hit a data wall immediately, but warns that pre-training must evolve as data limits approach.

- Schulman rejects the plateau narrative: the time elapsed since GPT-4 is poor evidence of a plateau, because training each new model generation takes a long time.

- A real data wall is plausible eventually, but Schulman does not expect models to hit it immediately.

- As data limits approach, the nature of pre-training will need to change; scaling the same recipe will not be enough.

- Cross-domain transfer is real but hard to measure scientifically: ablation studies at GPT-4 scale are not feasible.

- Training on code is believed to improve language reasoning, but no public ablation results confirm this yet.

- Fine-tuning on domain-specific labeled data is not strictly required: base models generalize from material already in their pre-training corpora (man pages, shell scripts, etc.).

- A helpfulness preference model can generalize to STEM domains even without STEM-specific training examples.

2024-05-13