I Built TetrisBench, Where LLMs Compete at Playing Tetris. Here's What I Found.

TLDR

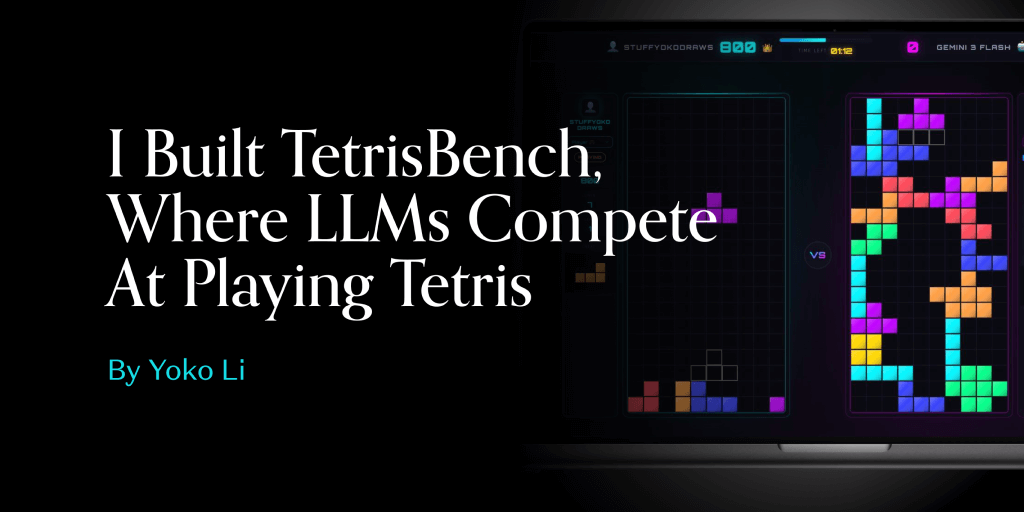

- A developer built TetrisBench, a benchmark where LLMs play Tetris, to compare model capabilities in a structured game environment.

Key Takeaways

- TetrisBench uses Tetris gameplay as a benchmark to evaluate and compare the performance of large language models.

- The write-up was published through a16z (Andreessen Horowitz), which suggests the findings are aimed at practitioners evaluating LLM reasoning or planning ability.

- Tetris requires sequential spatial decision-making, making it a non-trivial test of model behavior beyond text generation.

- The benchmark’s structure allows direct head-to-head model comparison on a concrete, repeatable task.
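
To make "sequential spatial decision-making" and "head-to-head comparison on a repeatable task" concrete, here is a minimal sketch of the idea. It is not TetrisBench's actual harness, whose prompts, rules, and scoring are not described in this summary. Everything in it is a hypothetical simplification: the 6x8 board, the two-piece set without rotation, the `drop` and `play` helpers, and the two policies that stand in for models. The point it illustrates is that each player sees the same seeded piece sequence, chooses one column per piece, and is scored on lines cleared.

```python
import random
from typing import Callable, List, Optional, Tuple

WIDTH, HEIGHT = 6, 8            # hypothetical board size, smaller than real Tetris
Board = List[List[int]]         # rows listed top (index 0) to bottom

# Two simplified pieces as (row, col) offsets; real Tetris has seven, with rotations.
PIECES = {
    "O": [(0, 0), (0, 1), (1, 0), (1, 1)],
    "I": [(0, 0), (0, 1), (0, 2), (0, 3)],
}

def fits(board: Board, cells, row: int, col: int) -> bool:
    """True if every cell of the piece is inside the board and unoccupied."""
    for dr, dc in cells:
        r, c = row + dr, col + dc
        if r < 0 or r >= HEIGHT or c < 0 or c >= WIDTH or board[r][c]:
            return False
    return True

def drop(board: Board, name: str, col: int) -> Optional[Tuple[Board, int]]:
    """Drop a piece straight down at `col`; return (new_board, lines_cleared) or None."""
    cells = PIECES[name]
    if not fits(board, cells, 0, col):
        return None             # illegal column or stack too high: game over
    row = 0
    while fits(board, cells, row + 1, col):
        row += 1
    new = [r[:] for r in board]
    for dr, dc in cells:
        new[row + dr][col + dc] = 1
    kept = [r for r in new if not all(r)]          # remove completed rows
    cleared = HEIGHT - len(kept)
    return [[0] * WIDTH for _ in range(cleared)] + kept, cleared

Policy = Callable[[Board, str], int]  # stand-in for "ask a model which column to use"

def leftmost_policy(board: Board, name: str) -> int:
    return 0

def greedy_policy(board: Board, name: str) -> int:
    """Pick the column that clears the most lines, then leaves the most empty rows."""
    best_col, best_score = 0, (-1, -1)
    for col in range(WIDTH):
        result = drop(board, name, col)
        if result is None:
            continue
        new_board, cleared = result
        score = (cleared, sum(1 for r in new_board if not any(r)))
        if score > best_score:
            best_col, best_score = col, score
    return best_col

def play(policy: Policy, seed: int, max_pieces: int = 50) -> int:
    """Play one game on a seeded piece sequence; return total lines cleared."""
    rng = random.Random(seed)
    board = [[0] * WIDTH for _ in range(HEIGHT)]
    total = 0
    for _ in range(max_pieces):
        name = rng.choice(sorted(PIECES))
        result = drop(board, name, policy(board, name))
        if result is None:
            break
        board, cleared = result
        total += cleared
    return total

if __name__ == "__main__":
    for label, policy in [("leftmost", leftmost_policy), ("greedy", greedy_policy)]:
        print(label, play(policy, seed=1))
```

Because both players receive the same seed, score differences come from placement decisions and not from a luckier piece sequence. That is the property that makes this kind of comparison repeatable.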

Why It Matters

- Game-based benchmarks like TetrisBench offer a concrete, reproducible alternative to text-only evals for comparing LLM planning ability.

- Publishing through a16z signals practitioner-level interest in structured LLM capability measurement beyond standard NLP benchmarks.

Andreessen Horowitz · 2026-02-23 · Read the original